Ceph 14.2.10 on aarch64: mounting an RBD block device from a client outside the cluster

Steps as tested on a machine inside the cluster:

ceph osd pool create block-pool 64 64

ceph osd pool application enable block-pool rbd

rbd create vdisk1 --size 4G --pool block-pool --image-format 2 --image-feature layering

rbd map block-pool/vdisk1

rbd map prints the device it creates; on this node it was /dev/rbd1, which the commands below use.

mkdir /mnt/vdisk1

mkfs.xfs /dev/rbd1

mount /dev/rbd1 /mnt/vdisk1

Running the same steps directly on the client (fails):

[root@ceph-client mnt]# rbd map block-pool/vdisk1

unable to get monitor info from DNS SRV with service name: ceph-mon

In some cases useful info is found in syslog - try "dmesg | tail".

rbd: map failed: 2023-11-14 15:51:13.809 ffff8d4e7010 -1 failed for service _ceph-mon._tcp

(2) No such file or directory

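The client cannot find the monitors: it has no monitor address configured, so it falls back to a DNS SRV lookup for _ceph-mon._tcp, which fails.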

Passing the monitor addresses explicitly with -m gets past this step, but mapping still fails:

[root@ceph-client mnt]# rbd map block-pool/vdisk1 -m 172.17.163.105,172.17.112.206

rbd: sysfs write failed

2023-11-14 15:55:34.873 ffff79be78c0 -1 monclient(hunting): handle_auth_bad_method server allowed_methods [2] but i only support [2,1]

rbd: couldn't connect to the cluster!

In some cases useful info is found in syslog - try "dmesg | tail".

rbd: map failed: (22) Invalid argument

[root@ceph-client mnt]# rbd map block-pool/vdisk1 -m 172.17.163.105

rbd: sysfs write failed

2023-11-14 15:58:45.753 ffffb09918c0 -1 monclient(hunting): handle_auth_bad_method server allowed_methods [2] but i only support [2,1]

rbd: couldn't connect to the cluster!

In some cases useful info is found in syslog - try "dmesg | tail".

rbd: map failed: (22) Invalid argument

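dmesg shows "no secret set (for auth_x protocol)". Both errors point to authentication: the client has no keyring for the user it connects as (client.admin by default), so no cephx secret can be handed to the kernel RBD driver.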

[91158.067305] libceph: no secret set (for auth_x protocol)

[91158.068206] libceph: error -22 on auth protocol 2 init

Authorization

Check the pool

Check the RBD image
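The screenshot for these two checks was not preserved, so the exact commands the author ran are unknown. A minimal sketch of equivalent checks, assuming admin credentials on a cluster node such as ceph-0; check_rbd_target is a hypothetical helper name:

```shell
# Hypothetical helper: inspect the pool and image before granting access.
# Assumes it runs on a cluster node with admin credentials (e.g. ceph-0).
check_rbd_target() {
  # $1 = pool name, $2 = image name
  ceph osd pool ls detail   # per-pool settings, including enabled applications
  rbd ls -l "$1"            # images in the pool with size and format
  rbd info "$1/$2"          # image features, size, object layout
}

# Usage:
# check_rbd_target block-pool vdisk1
```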


Create a dedicated client user

[root@ceph-0 ~]# ceph auth get-or-create client.blockuser mon 'allow r' osd 'allow * pool=block-pool'

[client.blockuser]

key = AQDNLFNlZXSwERAA9uYYz7UdIKmuO1bSiSmEVg==
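An alternative worth considering, not what the original used: Ceph's built-in RBD profiles grant a narrower set of caps than osd 'allow * pool=block-pool'. create_rbd_user is a hypothetical wrapper. If client.blockuser already exists with different caps, get-or-create reports an error, and ceph auth caps is the command for changing the caps of an existing user.

```shell
# Hypothetical wrapper around `ceph auth get-or-create` using RBD profiles
# instead of the original osd 'allow * pool=...' caps (an assumption).
create_rbd_user() {
  # $1 = user name without the "client." prefix, $2 = pool name
  ceph auth get-or-create "client.$1" mon 'profile rbd' osd "profile rbd pool=$2"
}

# Usage (on a node with admin credentials, e.g. ceph-0):
# create_rbd_user blockuser block-pool
```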

Show the user's key and caps

[root@ceph-0 ~]# ceph auth get client.blockuser

exported keyring for client.blockuser

[client.blockuser]

key = AQDNLFNlZXSwERAA9uYYz7UdIKmuO1bSiSmEVg==

caps mon = "allow r"

caps osd = "allow * pool=block-pool"

Export the keyring to a file

[root@ceph-0 ~]# ceph auth get client.blockuser -o /etc/ceph/ceph.client.blockuser.keyring

exported keyring for client.blockuser
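Why the file name matters: with --user blockuser, Ceph clients search by default for a keyring at /etc/ceph/$cluster.$name.keyring, which here is /etc/ceph/ceph.client.blockuser.keyring, so no --keyring option is needed. The sketch below only spells out that naming convention; keyring_path is a hypothetical helper, not part of the Ceph CLI:

```shell
# Hypothetical helper: the default keyring path a Ceph client checks for a
# user. $name is "client.<user>" and $cluster defaults to "ceph".
keyring_path() {
  # $1 = user name without the "client." prefix, $2 = cluster name (optional)
  printf '/etc/ceph/%s.client.%s.keyring\n' "${2:-ceph}" "$1"
}

# Usage:
# keyring_path blockuser   # prints /etc/ceph/ceph.client.blockuser.keyring
```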

Test the keyring on ceph-0 (succeeds)

[root@ceph-0 ~]# ceph --user blockuser -s

cluster:

id: ff72b496-d036-4f1b-b2ad-55358f3c16cb

health: HEALTH_ERR

mon ceph-0 is very low on available space

services:

mon: 4 daemons, quorum ceph-3,ceph-1,ceph-0,ceph-2 (age 30h)

mgr: ceph-0(active, since 3d), standbys: ceph-1, ceph-3, ceph-2

mds: 4 up:standby

osd: 4 osds: 3 up (since 2d), 3 in (since 2d)

rgw: 4 daemons active (ceph-0, ceph-1, ceph-2, ceph-3)

task status:

data:

pools: 5 pools, 192 pgs

objects: 201 objects, 6.4 MiB

usage: 3.2 GiB used, 297 GiB / 300 GiB avail

pgs: 192 active+clean

Copy the keyring to the client

[root@ceph-0 ~]# scp /etc/ceph/ceph.client.blockuser.keyring root@ceph-client:/etc/ceph/

Verify on the client (succeeds, but only with -m, since the client has no ceph.conf telling it where the monitors are)

[root@ceph-client ceph]# ceph --user blockuser -s -m ceph-0

cluster:

id: ff72b496-d036-4f1b-b2ad-55358f3c16cb

health: HEALTH_ERR

mon ceph-0 is very low on available space

services:

mon: 4 daemons, quorum ceph-3,ceph-1,ceph-0,ceph-2 (age 30h)

mgr: ceph-0(active, since 3d), standbys: ceph-1, ceph-3, ceph-2

mds: 4 up:standby

osd: 4 osds: 3 up (since 2d), 3 in (since 2d)

rgw: 4 daemons active (ceph-0, ceph-1, ceph-2, ceph-3)

task status:

data:

pools: 5 pools, 192 pgs

objects: 201 objects, 6.4 MiB

usage: 3.2 GiB used, 297 GiB / 300 GiB avail

pgs: 192 active+clean
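Because the client has no ceph.conf, every ceph or rbd command on it needs -m. A minimal sketch of a client-side config that lists the monitors, assuming the hostnames ceph-0 to ceph-3 resolve on the client (as ceph-0 did for -m ceph-0 above) and reusing the fsid from the ceph -s output. write_client_conf is a hypothetical helper that takes the target directory, so the file can be inspected outside /etc/ceph first:

```shell
# Hypothetical helper: write a minimal client-side ceph.conf so ceph/rbd can
# locate the monitors without -m. Monitor hostnames are assumed to resolve.
write_client_conf() {
  conf_dir="${1:-/etc/ceph}"   # target directory, /etc/ceph by default
  mkdir -p "$conf_dir" || return 1
  cat > "$conf_dir/ceph.conf" <<'EOF'
[global]
fsid = ff72b496-d036-4f1b-b2ad-55358f3c16cb
mon_host = ceph-0,ceph-1,ceph-2,ceph-3
EOF
}

# Usage (as root on ceph-client):
# write_client_conf /etc/ceph
# ceph --user blockuser -s
```

With /etc/ceph/ceph.conf written this way, rbd map block-pool/vdisk1 --user blockuser should work without -m, though this is untested here.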

Map the block device on the client, passing the monitor addresses

[root@ceph-client ceph]# rbd map block-pool/vdisk1 --user blockuser -m ceph-0,ceph-1,ceph-2,ceph-3

/dev/rbd0

rbd map printed /dev/rbd0: the image is now mapped as a local block device on the client.
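Since rbd map prints the path of the device it created, a script can capture it instead of assuming /dev/rbd0. A sketch with a hypothetical map_image helper that reuses the monitor list from the command above:

```shell
# Hypothetical helper: map an image and print the resulting device path
# (rbd map writes it to stdout). Monitor list copied from the command above.
map_image() {
  # $1 = pool/image, $2 = user name without the "client." prefix
  rbd map "$1" --user "$2" -m ceph-0,ceph-1,ceph-2,ceph-3
}

# Usage:
# dev=$(map_image block-pool/vdisk1 blockuser) && echo "$dev"
# rbd showmapped   # lists the current mappings on this host
```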

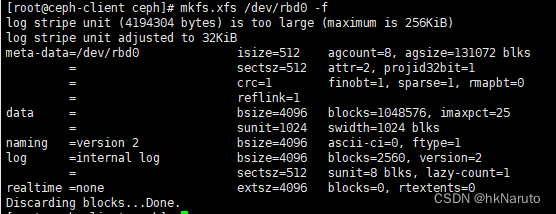

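Format the block device

The -f flag forces mkfs.xfs to overwrite the XFS filesystem already created on this image from ceph-0, which erases its contents. Unmount (and unmap) the image on ceph-0 first: XFS is not a cluster filesystem and must not be mounted on two hosts at once.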

mkfs.xfs /dev/rbd0 -f

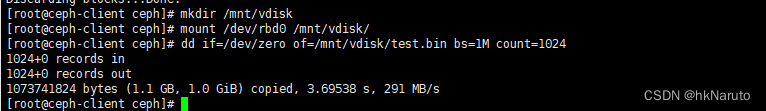

Mount the block device (succeeds)
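The mount command itself is not shown. A sketch, assuming the client uses the same mount point as the in-cluster test (/mnt/vdisk1); mount_rbd is a hypothetical helper:

```shell
# Hypothetical helper: create the mount point if needed and mount the device.
# The default mount point /mnt/vdisk1 is an assumption taken from the
# in-cluster steps.
mount_rbd() {
  # $1 = device path (e.g. /dev/rbd0), $2 = mount point (optional)
  mnt="${2:-/mnt/vdisk1}"
  mkdir -p "$mnt" && mount "$1" "$mnt"
}

# Usage (as root on ceph-client):
# mount_rbd /dev/rbd0 /mnt/vdisk1
# df -h /mnt/vdisk1
```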
